The Many Cases of Insider Trading on Polymarket

High-profile cases of suspected insider trading are becoming a constant reality on Polymarket. These stories cover everything from leaked industry reports to classified state secrets. But Polymarket isn’t worried about them: to this platform, insider knowledge is a feature, not a bug.

2025 ended with a major scandal. A Polymarket trader made $1 million in profit in 24 hours on trades tied to Google’s 2025 Year in Search rankings. The trader, who goes by the pseudonym AlphaRaccoon, had an astonishing success rate: 22 of 23 predictions paid off.

The streak immediately raised red flags. Accuracy that high is statistically improbable for a trader without an edge, and the massive size of the positions led observers to label it a clear case of insider trading. Could the trader have been a Google employee who knew the contents of the search trends report before its release?
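To put a rough number on "improbable," the short Python sketch below computes the chance of getting at least 22 of 23 bets right by luck. It assumes every bet is independent and has the same win probability `p`. That is a simplification: the actual market prices of AlphaRaccoon's positions are not reported here, so the values of `p` are purely illustrative and `prob_at_least` is a hypothetical helper.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """Return P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Illustrative per-bet win probabilities (assumed, not taken from market data).
for p in (0.5, 0.7, 0.9):
    print(f"p = {p}: P(at least 22 of 23) = {prob_at_least(22, 23, p):.2e}")
```

With coin-flip odds (`p = 0.5`), the chance works out to 24 in 2^23, or roughly 3 in a million. The result is very sensitive to `p`, though: a trader who only backed heavy favorites would hit such a streak far more often, which is why the size and pricing of the positions matter as much as the hit rate.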

Trades placed by the account AlphaRaccoon on Polymarket

Then, in January 2026, an anonymous trader on Polymarket put $32,000 on the downfall of Venezuelan dictator Nicolás Maduro just hours before President Trump announced his capture. That trader pocketed more than $400,000. But the size of the trade and the timing were simply too good to be true. Experts immediately suspected the trader had insider knowledge of the U.S. military raid in the South American country.
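The payout itself hints at how unlikely the market considered the outcome. The sketch below backs out the average entry price from the reported figures, assuming a standard binary contract that pays $1 per winning share, a single average purchase price, and no fees. The function name `implied_entry_price` is hypothetical, and because the reported profit was "more than" $400,000, the result is an upper bound on the price.

```python
def implied_entry_price(stake: float, profit: float) -> float:
    """Average price paid per $1 share, given total stake and total profit.

    Assumes each winning share pays out $1, so payout = stake / price
    and profit = payout - stake.
    """
    return stake / (stake + profit)


price = implied_entry_price(stake=32_000, profit=400_000)
print(f"Implied average entry price: ${price:.3f} per share")
```

Under these assumptions the trader paid about 7 cents per share, meaning the market was pricing Maduro's fall at roughly a 7% chance when the bet was placed.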

However, the most serious case comes from Israel. Two individuals there have been indicted by the state for using classified military intelligence—state secrets—to profit on Polymarket.

The pair (an Israel Defense Forces reservist and a civilian) placed highly accurate wagers on military operations last year. Among them was a correct prediction of the exact timeframe in which Israel launched strikes on Iran in June 2025.

They earned over $150,000 in profits. Now, they are facing charges for “severe security offenses,” as well as bribery and obstruction of justice.

Kalshi, a rival prediction market, commented on insider trading when pressed following the Maduro case in January. In a statement released to CBS News New York, the company said:

“Kalshi explicitly prohibits insider trading of any form, including government employees trading on prediction markets related to government activity. We’re looking at the specifics of the bill, but we already ban the activity it cites and are in support of means to prevent this type of activity. Market integrity is integral to the functioning of any US regulated exchange. Activity from the past few days has occurred on an unregulated exchange.”

Both Kalshi and Polymarket are now federally regulated by the Commodity Futures Trading Commission (CFTC). Polymarket secured this approval in late 2025. Under this regulation, both platforms use traditional stock-market surveillance. They also set strict position limits to prevent users from trading on non-public information.

But for Polymarket, this feels more like ticking boxes than actively tackling the problem.

In fact, Polymarket treats insider trading as a feature, not a bug. In a recent interview, Polymarket CEO Shayne Coplan defended the practice of trading on non-public information, telling CBS News that insiders “having an edge on the market is a good thing.”

Coplan has repeatedly argued that allowing insiders to profit speeds up the discovery of the truth, noting that the platform “creates this financial incentive for people to go and divulge the information to the market.”

Polymarket CEO Shayne Coplan

The difference between the two platforms is fundamental. Kalshi operates as a traditional, centralized financial exchange, much like a stock market. It runs on fiat US dollars and requires identity verification.

Polymarket, on the other hand, is a decentralized platform built on the Polygon blockchain. It runs exclusively on cryptocurrency (USDC), allowing users to connect and trade via Web3 wallets. Polymarket is highly pseudonymous. Naturally, that level of anonymity makes it the platform of choice for anyone wanting to hide their identity, such as someone trading on non-public information.

In response, Representative Ritchie Torres introduced the Public Integrity in Financial Prediction Markets Act of 2026. The bill seeks to outlaw wagering on prediction markets by members of Congress and other insiders.

It aims to: “prohibit federal elected officials, political appointees, Executive Branch employees, and congressional staff from buying, selling, or exchanging prediction market contracts tied to government policy.”

Kalshi has responded to media requests regarding the bill, confirming they support it. Polymarket, on the other hand, has not.

Despite the controversy, the federal government currently shields these platforms. Michael Selig, the recently appointed Chair of the CFTC, has been vocal in his support. In the face of a growing wave of state-level lawsuits seeking to ban the sites as unlicensed gambling, the CFTC has stepped in to protect them, warning states that prediction markets are federally regulated financial tools, not local sportsbooks.

Speaking of this support, Polymarket CEO Coplan has said: “This [administration] is very pro-innovation, and pro-crypto, and pro-Polymarket, which is amazing. I need help navigating that, right? I’m a young entrepreneur.”

So it is hard to see Polymarket being reined in for the time being. The current U.S. administration is the most pro-crypto ever. But worldwide, the platform will certainly face further scrutiny over the growing number of suspected insider trading cases.

The post The Many Cases of Insider Trading on Polymarket appeared first on BitcoinChaser.

You May Also Like

The Stunning ASEAN Winner Emerges As Manufacturing Shifts Accelerate

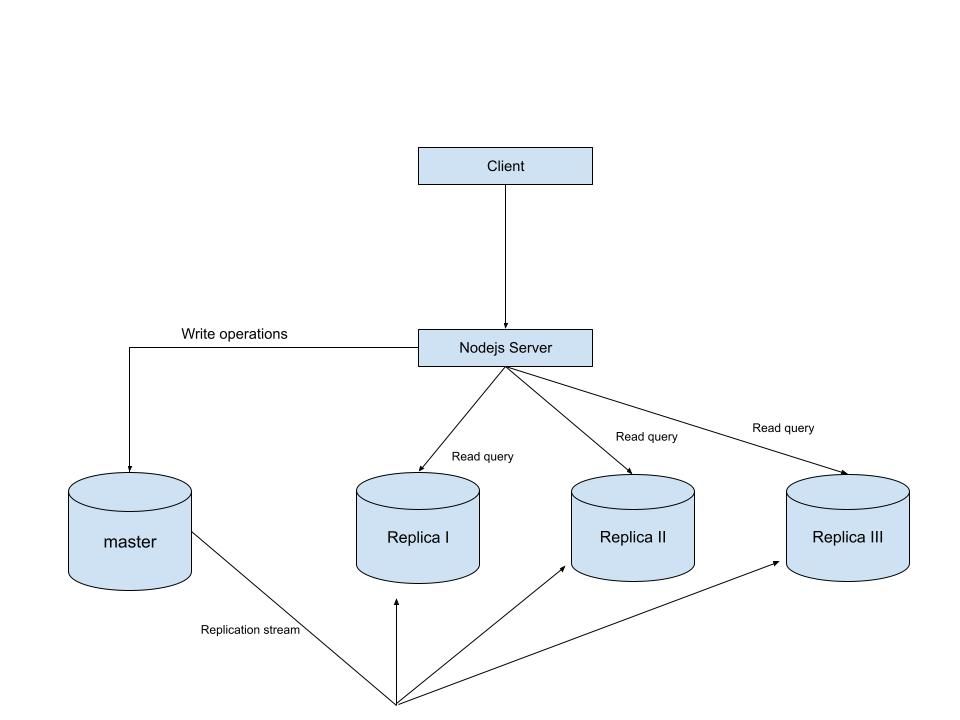
