Pharos Network Unveils RealFi Alliance Ahead of Mainnet Launch

Pharos Network has launched the RealFi Alliance, a new ecosystem push that aims to make institutional real-world assets work smoothly onchain as the network heads toward its mainnet debut.

Key Takeaways

- Pharos Network announced the RealFi Alliance to bring issuers, infrastructure providers, and builders into one shared execution framework for RWAs.

- The inaugural cohort includes Chainlink, Centrifuge, LayerZero, Re7 Labs, Ember, and several other ecosystem players.

- The Alliance targets major friction points like fragmented liquidity, inconsistent standards, and compliance gaps that slow institutional adoption.

- Pharos says the effort is designed so assets are not just tokenized but also active, composable, and usable in real workflows.

What Happened?

Pharos Network said it has formed the RealFi Alliance, an ecosystem initiative meant to coordinate institutional asset issuance, infrastructure, and applications around a shared onchain standard. The company positioned it as a step toward moving the RWA market from disconnected tests into a scalable execution model.

Pharos Wants RWAs to Act Like Infrastructure, Not Collectibles

In the RWA space, plenty of projects can tokenize an asset, but far fewer can make that asset useful across lending, yield, payments, custody, and compliance without breaking into one-off systems. Pharos is pitching RealFi as the missing layer that turns tokenized assets into something closer to institutional financial plumbing.

Instead of treating RWAs as static tokens that sit in a wallet, Pharos says the Alliance is built around the idea that assets should stay active and composable, meaning they can move through different protocols and workflows without constant rewiring. The goal is to create an environment where institutions can issue assets, manage risk, access liquidity, and meet compliance requirements inside one coordinated framework.

Who Is in the RealFi Alliance Cohort?

Pharos said the inaugural RealFi Alliance cohort includes: Chainlink, Asseto Finance, Ember, Faroo, LayerZero, R25, Re7 Labs, TopNod, and Centrifuge.

That mix matters. Chainlink brings oracle infrastructure that many institutions already view as the default standard for onchain data. Centrifuge is known for real-world asset financing and tokenization rails. LayerZero adds crosschain messaging, which is often essential if assets need to move or be referenced across multiple networks. Pharos is also highlighting participation from asset and strategy groups such as Ember and Re7 Labs, which it says supports a more end-to-end approach, from issuance through vault design and risk controls.

Four Pillars Designed to Reduce Friction

Pharos described the Alliance as operating across four pillars aimed at long-term adoption and system integrity.

First is asset enablement, focused on getting real value onchain in secure and composable formats built for sustained participation, not short demos.

Second is infrastructure and compliance alignment. Pharos says this pillar leverages its deep parallel execution and built-in compliance modules to meet institutional-grade standards. The message is clear: institutions will not scale if every asset has a different security model and compliance has to be bolted on later.

Third is liquidity and utility design, which is about making sure assets can actually be used. Pharos points to pathways such as staking, yield, and application integration. The network highlighted a collaboration between Ember and Re7 Labs, where institutional-grade risk management and vault curation are integrated directly into the asset lifecycle.

Fourth is market transparency and benchmarks. This is where Pharos wants clearer visibility into risk and yield sources, which it says is critical for sophisticated allocators who need repeatable standards before deploying capital.

A Mainnet Launch With Partners Already Building

Pharos framed the Alliance as a way to ensure its upcoming mainnet launches as a ready-to-use environment rather than an empty chain waiting for liquidity. The company said the Alliance will expand in structured batches over time, with future members selected based on asset quality, technical readiness, and ecosystem alignment.

Wish Wu, Co-Founder and CEO of Pharos Network, said the key problem is not the lack of tokenized assets but the lack of a unified environment where they can function at scale. Wu added that the RealFi Alliance is meant to align leaders like Chainlink with specialized operators so real value can move onchain with institutional-grade reliability.

Pharos also described itself as an inclusive financial Layer 1 for RealFi, combining modular architecture, deep parallel execution, and built-in compliance. The project says it was built by leadership and engineers from Ant Group and is backed by Hack VC, Faction VC, and other investors.

CoinLaw’s Takeaway

I have seen the RWA conversation get stuck in the same loop for years: tokenize something, run a pilot, then hit the wall when liquidity, compliance, and real usage do not line up. What I like about the RealFi Alliance framing is that it is not pretending tokenization alone solves anything. If Pharos can actually ship a mainnet where oracles, crosschain plumbing, asset operators, and risk managers are already working under shared standards, that is when institutions start paying attention. In my experience, institutions do not want fifty bespoke integrations. They want a reliable system that feels boring in the best possible way. If this Alliance delivers that, it could push RWAs from marketing demos into real onchain finance.

The post Pharos Network Unveils RealFi Alliance Ahead of Mainnet Launch appeared first on CoinLaw.

You May Also Like

The Stunning ASEAN Winner Emerges As Manufacturing Shifts Accelerate

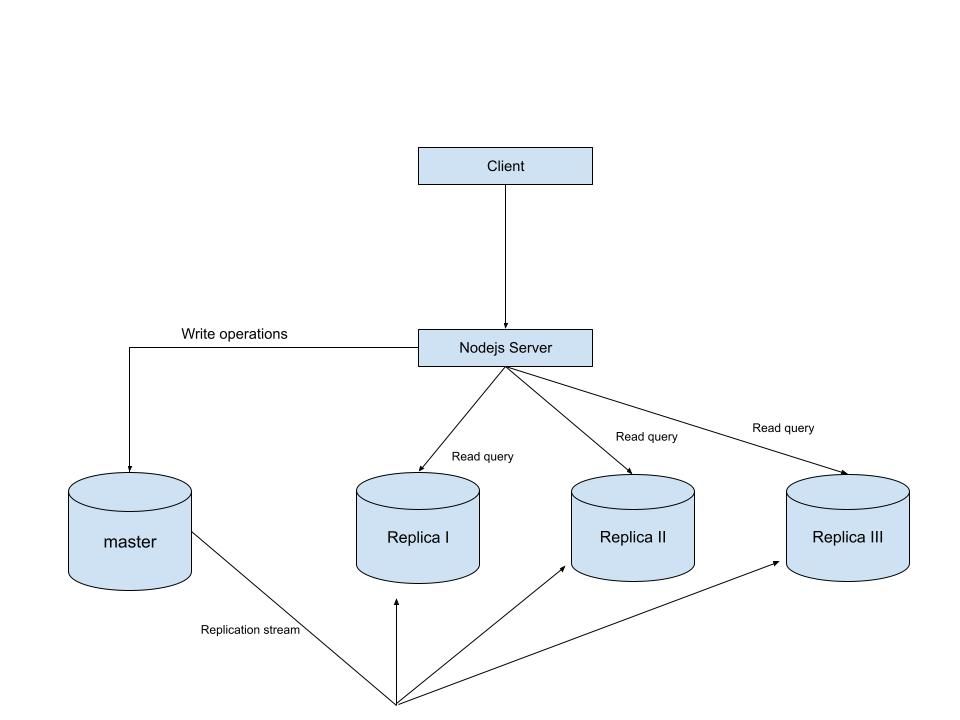

MySQL Single Leader Replication with Node.js and Docker

    command: --server-id=1 --log-bin=ON

The --server-id option gives each MySQL server in your replication setup its own name tag. Each one has to be unique, and without it replication won’t work at all. Another useful option not included here is binlog_format=ROW, which tells MySQL how to record changes before passing them along to the replicas. MySQL already uses row-based replication by default, but you can set it to ROW explicitly to be sure, or switch it to STATEMENT if you’d rather log the actual SQL statements instead of row-by-row changes.

Run Our Containers on Docker

Now, in the terminal, we can run the following command to spin up our database containers:

    docker-compose up -d

Setting Up Our Master (Primary) Server

To configure our master server, we first have to access the running instance on Docker using the following command:

    docker exec -it mysql-master bash

This command opens an interactive Bash shell inside the running Docker container named mysql-master, allowing us to run commands directly inside that container.

Now that we’re inside the container, we can access the MySQL server and start running commands. Type:

    mysql -uroot -p

This will log you into MySQL as the root user. You’ll be prompted to enter the password you set in your docker-compose.yml file.

Next, we need to create a special user that our replicas will use to connect to the master server and pull data. Inside the MySQL prompt, run the following commands:

    CREATE USER 'repl_user'@'%' IDENTIFIED BY 'replication_pass';
    GRANT REPLICATION SLAVE ON *.* TO 'repl_user'@'%';
    FLUSH PRIVILEGES;

Here’s what’s happening:

- CREATE USER makes a new MySQL user called repl_user with the password replication_pass.

- GRANT REPLICATION SLAVE lets this user connect as a replica and read changes from the master.

- FLUSH PRIVILEGES tells MySQL to reload the user permissions so they take effect immediately.

Time to Configure the Replica (Secondary) Servers

a. First, let’s access the replica containers the same way we did with the master. Run these commands in your terminal for each of the replica containers:

    docker exec -it <replica_container_name> bash
    mysql -uroot -p

<replica_container_name> should be replaced with the name of the replica container you are trying to set up.

b. Now it’s time to tell our replica where to get its data from. While inside the replica’s MySQL shell, run the following command to configure replication using the master’s details:

    CHANGE REPLICATION SOURCE TO
      SOURCE_HOST='mysql-master',
      SOURCE_USER='repl_user',
      SOURCE_PASSWORD='replication_pass',
      GET_SOURCE_PUBLIC_KEY=1;

With the replication settings in place, let’s fire up the replica and get it syncing with the master. Still inside the MySQL shell on the replica, run:

    START REPLICA;

This starts the replication process. To make sure everything is working, check the replica’s status with:

    SHOW REPLICA STATUS\G

Look for Replica_IO_Running and Replica_SQL_Running. If both say Yes, congratulations! 🎉 Your replica is now successfully connected to the master and replicating data in real time.
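The same two fields can also be checked from code rather than by eye. The snippet below is a minimal sketch, not part of the original setup: isReplicaHealthy and the sample rows are hypothetical, and it assumes the status row arrives as a plain object keyed by the column names shown by SHOW REPLICA STATUS.

```javascript
// Sketch: decide whether a replica looks healthy from one row of
// SHOW REPLICA STATUS. isReplicaHealthy is a hypothetical helper name.
function isReplicaHealthy(statusRow) {
  // No row at all means replication has not been configured on this server.
  if (!statusRow) return false;
  // Both the I/O thread and the SQL thread must report "Yes".
  return (
    statusRow.Replica_IO_Running === "Yes" &&
    statusRow.Replica_SQL_Running === "Yes"
  );
}

// Sample rows with made-up values, shaped like a driver's result objects.
const healthyRow = { Replica_IO_Running: "Yes", Replica_SQL_Running: "Yes" };
const stalledRow = { Replica_IO_Running: "Connecting", Replica_SQL_Running: "Yes" };

console.log(isReplicaHealthy(healthyRow));
console.log(isReplicaHealthy(stalledRow));
```

With the mysql2 promise API, the row would typically come from something like `const [rows] = await connection.query("SHOW REPLICA STATUS")`, passing `rows[0]` to the helper.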

Testing Our Replication Setup from the Node.js App

Now that our replication is successfully set up, we can configure our Node.js server to observe data being replicated in real time from the master server to the replica servers whenever we write to it. We start by installing the following dependencies:

    npm i express mysql2 sequelize

Now create a folder called src in the root directory and add the following files inside it: connection.js, index.js, and model.js. Our src directory should now contain these three files. We can now set up our connections to the master and replica servers in the connection.js file as shown below:

    const Sequelize = require("sequelize");

    const sequelize = new Sequelize({
      dialect: "mysql",
      replication: {
        // Writes go to the master
        write: {
          host: "127.0.0.1",
          username: "root",
          password: "master",
          database: "replicaDb",
        },
        // Reads are spread across the replicas
        read: [
          { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3307 },
          { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3308 },
          { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3309 },
        ],
      },
    });

    async function connectdb() {
      try {
        await sequelize.authenticate();
      } catch (error) {
        console.error("❌ unable to connect to the follower database", error);
      }
    }

    connectdb();

    module.exports = {
      sequelize,
    };

We can now create a User table in the model.js file:

    const { DataTypes } = require("sequelize");
    const { sequelize } = require("./connection");

    const User = sequelize.define("User", {
      name: {
        type: DataTypes.STRING,
        allowNull: false,
      },
      email: {
        type: DataTypes.STRING,
        unique: true,
        allowNull: false,
      },
    });

    module.exports = User;

Finally, in our index.js file we can start our server and listen for connections on port 3000. In the code sample below, all inserts and updates are routed by Sequelize to the master server, while all read queries are routed to the read replicas.

    const express = require("express");
    const { sequelize } = require("./connection");
    const User = require("./model");

    const app = express();
    app.use(express.json());

    async function main() {
      await sequelize.sync({ alter: true });

      app.get("/", (req, res) => {
        res.status(200).json({
          message: "first step to setting server up",
        });
      });

      app.post("/user", async (req, res) => {
        const { email, name } = req.body;
        // build() only creates the instance in memory; nothing is sent yet
        const newUser = User.build({ name, email });
        // This INSERT will go to the write (master) connection.
        // Await it so the response is sent only after the row is saved.
        await newUser.save({ returning: false });
        res.status(201).json({
          message: "User successfully created",
        });
      });

      app.get("/user", async (req, res) => {
        // This SELECT query will go to one of the read replicas
        const users = await User.findAll();
        res.status(200).json(users);
      });

      app.listen(3000, () => {
        console.log("server has connected");
      });
    }

    main();

When you make a POST request to the /user endpoint, take a moment to check both the master and replica servers to observe how data is replicated in real time. Right now, we are relying on Sequelize to route requests automatically, which works for development but isn’t robust enough for a production environment. In particular, if the master node goes down, Sequelize cannot automatically redirect requests to a newly elected leader. In the next part of this series, we’ll explore strategies to handle these challenges.
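To exercise the POST and GET routes described above without a separate HTTP client, a small script along these lines could be used. It is a sketch under assumptions: createAndListUsers is a hypothetical helper that is not part of the original app, it relies on the global fetch available in Node 18 and later, and it expects the server from index.js to be listening on port 3000.

```javascript
// Sketch: create a user through the API, then list users.
// createAndListUsers is a hypothetical helper; fetchImpl can be swapped
// for a stub so the function can be tried without a running server.
async function createAndListUsers(baseUrl, user, fetchImpl = fetch) {
  // POST /user is handled through the write (master) connection
  const created = await fetchImpl(`${baseUrl}/user`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(user),
  });
  if (created.status !== 201) {
    throw new Error(`unexpected status from POST /user: ${created.status}`);
  }

  // GET /user is served from one of the read replicas
  const listed = await fetchImpl(`${baseUrl}/user`);
  return listed.json();
}
```

A call such as `createAndListUsers("http://127.0.0.1:3000", { name: "Ada", email: "ada@example.com" }).then(console.log)` should print the stored users. If the new row is missing from the response, the replica may simply not have applied the change yet.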