Crypto.com Wins OCC Approval for National Trust Bank

Crypto.com has received conditional approval from the United States Office of the Comptroller of the Currency to establish a federally regulated national trust bank.

Key Takeaways

- Crypto.com secured conditional approval from the OCC to charter Foris Dax National Trust Bank, doing business as Crypto.com National Trust Bank.

- The planned entity will offer digital asset custody, staking, and trade settlement services under federal oversight.

- The charter strengthens Crypto.com’s position as a qualified custodian for institutional clients, including ETF issuers and asset managers.

- Final approval is still pending, but the move signals growing federal engagement with crypto firms.

What Happened?

Crypto.com announced that it has received conditional approval from the Office of the Comptroller of the Currency to establish Foris Dax National Trust Bank, which will operate as Crypto.com National Trust Bank once fully approved. The company first submitted its application to the OCC in October 2025.

The new entity would function as a limited purpose national trust bank. It would not accept deposits or issue loans, but would focus on digital asset services such as custody, staking, and trade settlement.

A Major Step Toward Federal Oversight

With this conditional approval, Crypto.com moves closer to operating under a single federal regulatory framework for its institutional custody services. Once fully approved, Crypto.com National Trust Bank will operate under direct OCC supervision.

The proposed bank will provide:

- Custody of digital assets

- Staking services across various blockchains and digital asset protocols, including Cronos

- Trade settlement services for digital assets

By securing a national trust charter, Crypto.com aims to consolidate its custodial offerings under federal oversight rather than relying solely on state-level regulation.

Currently, Crypto.com operates Crypto.com Custody Trust Company, which is regulated by the New Hampshire Banking Department as a non-depository trust company. The company clarified that the OCC’s conditional approval does not affect the continued operations of this entity.

Why This Matters for Institutions

Institutional investors such as ETF issuers and asset managers often prefer working with custodians that operate under national oversight. A federal framework can streamline compliance, simplify operational processes, and provide a higher level of regulatory clarity.

The national trust charter offers what Crypto.com describes as a one-stop-shop qualified custodian structure for trust services. However, it will not function as a traditional bank: the institution will not accept customer deposits or provide lending services.

In a statement, Kris Marszalek, Co-Founder and CEO of Crypto.com, said:

His remarks highlight the company’s strategy to position itself as a trusted infrastructure provider for large scale institutional participation in digital assets.

Part of a Broader Federal Trend

Crypto.com is not alone in pursuing a federal trust charter. In recent months, firms including Ripple, Circle, BitGo, Paxos, and Fidelity Digital Assets have received similar conditional approvals from the OCC. Last week, Stripe’s stablecoin firm Bridge also won initial approval to form a national trust bank.

These approvals reflect a broader shift as crypto firms seek to align more closely with federal regulators. The OCC has recently clarified that United States banks can engage in certain crypto-related activities, reducing compliance burdens and creating a more defined pathway for digital asset services.

While conditional approval does not represent final authorization, it is a significant regulatory milestone. Final approval will depend on the company meeting all requirements set by the OCC.

CoinLaw’s Takeaway

In my experience covering crypto regulation, federal oversight changes the game. I believe this move gives Crypto.com a stronger footing with serious institutional players who care deeply about compliance and custody standards. A national trust charter is not just a badge of honor. It is a signal that the company is willing to operate under stricter scrutiny.

I found that institutions often hesitate when regulatory structures are unclear. By moving under OCC supervision, Crypto.com is addressing that hesitation directly. If final approval comes through, this could significantly strengthen its role as a trusted custodian in the United States market.

The post Crypto.com Wins OCC Approval for National Trust Bank appeared first on CoinLaw.

You May Also Like

The Stunning ASEAN Winner Emerges As Manufacturing Shifts Accelerate

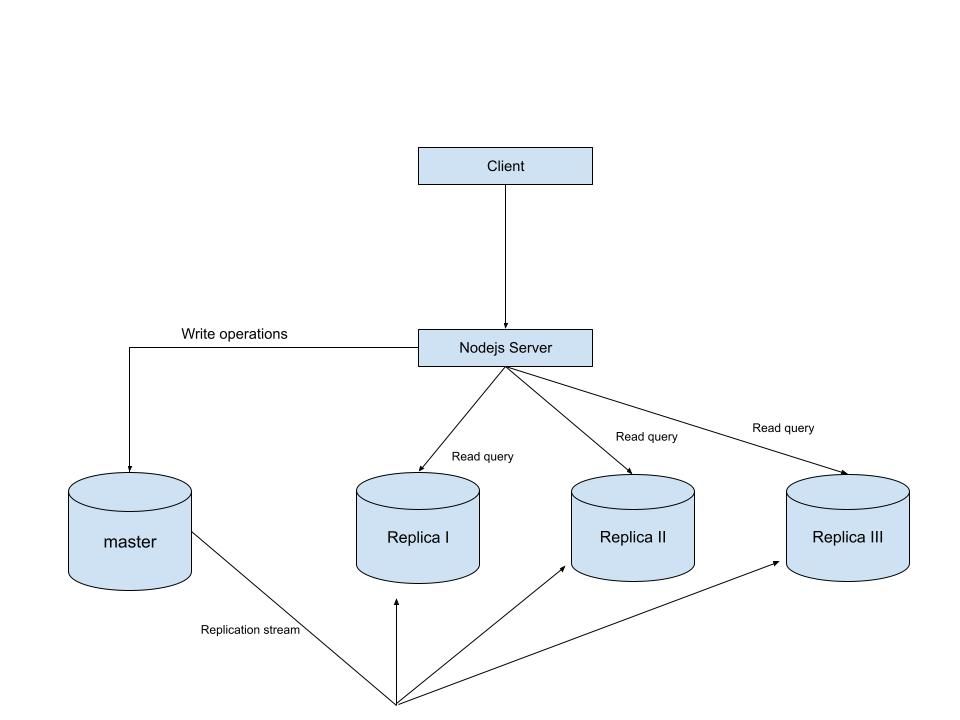

MySQL Single-Leader Replication with Node.js and Docker

Each MySQL service in docker-compose.yml is started with a command such as:

```yaml
command: --server-id=1 --log-bin=ON
```

The --server-id option gives each MySQL server in your replication setup its own ID. Each ID must be unique, and without it replication won’t work at all. Another useful option not included here is binlog_format=ROW. It tells MySQL how to record changes before passing them along to the replicas. MySQL already uses row-based replication by default, but you can set it to ROW explicitly to be sure. You can also switch it to STATEMENT if you’d rather log the actual SQL statements instead of row-by-row changes.

Running Our Containers on Docker

Now, in the terminal, run the following command to spin up the database containers:

```shell
docker-compose up -d
```

Setting Up Our Master (Primary) Server

To configure the master server, we first need to access its running container:

```shell
docker exec -it mysql-master bash
```

This command opens an interactive Bash shell inside the running Docker container named mysql-master, so we can run commands directly inside that container.

Now that we’re inside the container, we can log in to the MySQL server:

```shell
mysql -uroot -p
```

This logs you into MySQL as the root user. You’ll be prompted to enter the password you set in your docker-compose.yml file.

Next, we need to create a dedicated user that the replicas will use to connect to the master server and pull data. Inside the MySQL prompt, run the following statements:

```sql
CREATE USER 'repl_user'@'%' IDENTIFIED BY 'replication_pass';
GRANT REPLICATION SLAVE ON *.* TO 'repl_user'@'%';
FLUSH PRIVILEGES;
```

Here’s what’s happening:

- CREATE USER makes a new MySQL user called repl_user with the password replication_pass.

- GRANT REPLICATION SLAVE gives this user permission to act as a replication client.

- FLUSH PRIVILEGES tells MySQL to reload the grant tables. This step is not strictly required, because CREATE USER and GRANT already take effect immediately, but it is harmless.

Time to Configure the Replica (Secondary) Servers

a. First, access each replica container the same way we did with the master. Run these commands in your terminal for each of the replica containers:

```shell
docker exec -it <replica_container_name> bash
mysql -uroot -p
```

Replace <replica_container_name> with the name of the replica container you are setting up.

b. Now it’s time to tell the replica where to get its data from. While inside the replica’s MySQL shell, run the following statement to configure replication using the master’s details:

```sql
CHANGE REPLICATION SOURCE TO
  SOURCE_HOST='mysql-master',
  SOURCE_USER='repl_user',
  SOURCE_PASSWORD='replication_pass',
  GET_SOURCE_PUBLIC_KEY=1;
```

With the replication settings in place, let’s start the replica and get it syncing with the master. Still inside the MySQL shell on the replica, run:

```sql
START REPLICA;
```

This starts the replication process. To make sure everything is working, check the replica’s status with:

```sql
SHOW REPLICA STATUS\G
```

Look for Replica_IO_Running and Replica_SQL_Running. If both say Yes, congratulations! 🎉 Your replica is now connected to the master and replicating data in real time.
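Checking those two columns by eye works for one replica, but with three replicas it can help to script the check. The sketch below uses a hypothetical helper, isReplicaHealthy, which is not part of MySQL or mysql2. It takes one row of SHOW REPLICA STATUS output as a plain object keyed by column name, which is the shape mysql2's query() returns for result rows. It assumes MySQL 8.0.22 or later, where the columns are named Replica_IO_Running and Replica_SQL_Running.

```javascript
// Hypothetical helper: decides whether a replica is replicating, given one row
// of SHOW REPLICA STATUS output as a plain object keyed by column name.
function isReplicaHealthy(statusRow) {
  // An empty result set (no row) means replication was never configured here.
  if (!statusRow) {
    return false;
  }
  // Both the I/O (receiver) thread and the SQL (applier) thread must report "Yes".
  return (
    statusRow.Replica_IO_Running === "Yes" &&
    statusRow.Replica_SQL_Running === "Yes"
  );
}

// Illustrative rows shaped like SHOW REPLICA STATUS output.
const healthy = { Replica_IO_Running: "Yes", Replica_SQL_Running: "Yes" };
const connecting = { Replica_IO_Running: "Connecting", Replica_SQL_Running: "Yes" };

console.log(isReplicaHealthy(healthy)); // true
console.log(isReplicaHealthy(connecting)); // false
console.log(isReplicaHealthy(undefined)); // false
```

With mysql2/promise, the row could come from something like const [rows] = await connection.query("SHOW REPLICA STATUS"), followed by isReplicaHealthy(rows[0]) for each replica connection.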

Testing Our Replication Setup from the Node.js App

Now that replication is set up, we can configure a Node.js server and watch data being replicated from the master server to the replicas in real time whenever we write to it. We start by installing the following dependencies:

```shell
npm i express mysql2 sequelize
```

Next, create a folder called src in the root directory and add three files inside it: connection.js, index.js, and model.js. The project should now contain a src folder holding these three files.

We can now set up the connections to our master and replica servers in the connection.js file, as shown below:

```javascript
const Sequelize = require("sequelize");

const sequelize = new Sequelize({
  dialect: "mysql",
  replication: {
    // Writes go to the master; no port is given, so the default MySQL port (3306) is used
    write: {
      host: "127.0.0.1",
      username: "root",
      password: "master",
      database: "replicaDb",
    },
    // Read queries are spread across the three replicas
    read: [
      { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3307 },
      { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3308 },
      { host: "127.0.0.1", username: "root", password: "slave", database: "replicaDb", port: 3309 },
    ],
  },
});

async function connectdb() {
  try {
    await sequelize.authenticate();
  } catch (error) {
    console.error("❌ unable to connect to the follower database", error);
  }
}

connectdb();

module.exports = {
  sequelize,
};
```

We can now define a User model in the model.js file. By default, Sequelize stores it in a table named Users:

```javascript
const { DataTypes } = require("sequelize");
const { sequelize } = require("./connection");

// Each user needs a name and a unique email address
const User = sequelize.define("User", {
  name: {
    type: DataTypes.STRING,
    allowNull: false,
  },
  email: {
    type: DataTypes.STRING,
    unique: true,
    allowNull: false,
  },
});

module.exports = User;
```

Finally, in our index.js file we start the server and listen for connections on port 3000. In the code below, Sequelize routes all inserts and updates to the master server, while all read queries go to the read replicas.

```javascript
const express = require("express");
const { sequelize } = require("./connection");
const User = require("./model");

const app = express();
app.use(express.json());

async function main() {
  // Create or update the Users table; schema changes are writes, so they run on the master
  await sequelize.sync({ alter: true });

  app.get("/", (req, res) => {
    res.status(200).json({
      message: "first step to setting server up",
    });
  });

  app.post("/user", async (req, res) => {
    const { email, name } = req.body;
    try {
      const newUser = User.build({ name, email });
      // This INSERT goes to the write (master) connection; await it so errors are caught
      await newUser.save({ returning: false });
      res.status(201).json({
        message: "User successfully created",
      });
    } catch (error) {
      // e.g. a missing field or a duplicate email violating the unique constraint
      res.status(400).json({ message: error.message });
    }
  });

  app.get("/user", async (req, res) => {
    // This SELECT query goes to one of the read replicas
    const users = await User.findAll();
    res.status(200).json(users);
  });

  app.listen(3000, () => {
    console.log("server has connected");
  });
}

main().catch((error) => {
  console.error("failed to start the server", error);
  process.exit(1);
});
```

When you make a POST request to the /user endpoint, take a moment to check both the master and replica servers to observe how data is replicated in real time. Right now, we are relying on Sequelize to route queries automatically. That works for development but isn’t robust enough for a production environment. In particular, if the master node goes down, Sequelize cannot automatically redirect writes to a newly elected leader. In the next part of this series, we’ll explore strategies to handle these challenges.
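To make the idea of read/write splitting more concrete, here is a minimal, hand-rolled sketch. It is not Sequelize's internal implementation. It classifies a raw SQL string by its first keyword, sends SELECT and SHOW statements to the read hosts in round-robin order, and sends everything else to the single write host. The createRouter name and the host objects are hypothetical: the replica ports mirror connection.js, and port 3306 for the master is an assumption. Like the Sequelize setup above, it has no notion of failover. If the write host goes down, writes keep targeting it.

```javascript
// Hypothetical read/write router illustrating single-leader read/write splitting.
// A sketch only; this is not how Sequelize routes queries internally.
function createRouter(writeHost, readHosts) {
  let nextRead = 0; // index of the replica that serves the next read

  // Statements that only read data are safe for replicas; everything else
  // (INSERT, UPDATE, DELETE, DDL, ...) must go to the leader.
  function isReadOnly(sql) {
    const keyword = sql.trim().split(/\s+/)[0].toUpperCase();
    return keyword === "SELECT" || keyword === "SHOW";
  }

  return {
    hostFor(sql) {
      if (!isReadOnly(sql) || readHosts.length === 0) {
        return writeHost;
      }
      // Round-robin across replicas so reads are spread evenly.
      const host = readHosts[nextRead];
      nextRead = (nextRead + 1) % readHosts.length;
      return host;
    },
  };
}

// Replica ports mirror connection.js; 3306 for the master is an assumption.
const router = createRouter({ port: 3306 }, [
  { port: 3307 },
  { port: 3308 },
  { port: 3309 },
]);

console.log(router.hostFor("INSERT INTO Users (name) VALUES ('a')").port); // 3306
console.log(router.hostFor("SELECT * FROM Users").port); // 3307
console.log(router.hostFor("SELECT * FROM Users").port); // 3308
console.log(router.hostFor("SELECT * FROM Users").port); // 3309
console.log(router.hostFor("SELECT * FROM Users").port); // 3307
```

Keeping the round-robin counter inside the closure means each router instance balances its own reads. A leader-election or health-check layer, the kind of strategy the next part of the series promises, would be needed before writeHost could change at runtime.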